Generative AI Use Is Growing – Along With Concerns About Bias

While the technology is not new, the popularity and mainstream use of generative AI services expanded dramatically in 2023, and interest in the technology continues to grow. In fact, Fortune Business Insights forecasts that the global generative AI market will grow from USD 43.87 billion in 2023 to USD 667.96 billion by 2030.

To gain insight into user experiences with generative AI services, Applause surveyed 3,144 digital quality testing professionals worldwide about their use of the technology. The survey asked respondents about a range of topics, including bias, hallucinations, workplace support, the quality of generated content, and privacy concerns.

Among respondents:

- 59% said their workplaces allow generative AI use
- 79% said they are actively using a generative AI service
- The four most-used services are Bing, Bard, ChatGPT, and GitHub Copilot
- Of the active users, 90% are using ChatGPT

Reasons cited for using the technology ranged from work-related tasks, like writing software code, doing research, or creating proposals, to more creative pursuits like writing song lyrics or making electronic dance music.

However, despite the technology's popularity, the survey shows clear room for improvement.

Bias

A major concern with AI-driven technology is inherent bias, which can stem from poor-quality or insufficient data used to train the algorithm, or from biased decisions about how that data is applied.

When asked about their level of concern that bias could affect the accuracy, relevance, or tone of AI-generated content, 90% of participants expressed some level of concern. That is a 24% increase over the AI and Voice Applications Survey Applause conducted in March 2023, which asked respondents the same question.

Also regarding bias:

- 47% said they had experienced responses or content they considered biased
- 18% said they had received offensive responses

Hallucinations

In AI, hallucinations are confident responses that do not appear to be justified by the model's training data.

When asked about their encounters with hallucinations in AI-generated content:

- 37% said they had seen examples of hallucinations in AI responses
- 88% expressed concern about using the technology for software development because of the potential for hallucinations

Correctness and relevance of responses

Nearly half of respondents said the AI-generated responses they received were relevant and appropriate most of the time; 23% said the responses were correct about half of the time.

The most frequent problems respondents encountered when using the technology included:

- Misunderstood my prompt
- Gave a convincing but slightly incorrect answer
- Generated obviously wrong answers
- Response was irrelevant to me
- Referenced something that doesn’t exist

Quality of data

When asked about their level of concern regarding the quality of data used to train AI algorithms:

- 91% expressed some level of concern
- 22% said they were extremely concerned

Accessibility and inclusivity

With the European Accessibility Act compliance deadline approaching, companies will need to assess their digital experiences and take the necessary actions to ensure their digital products meet regulatory requirements.

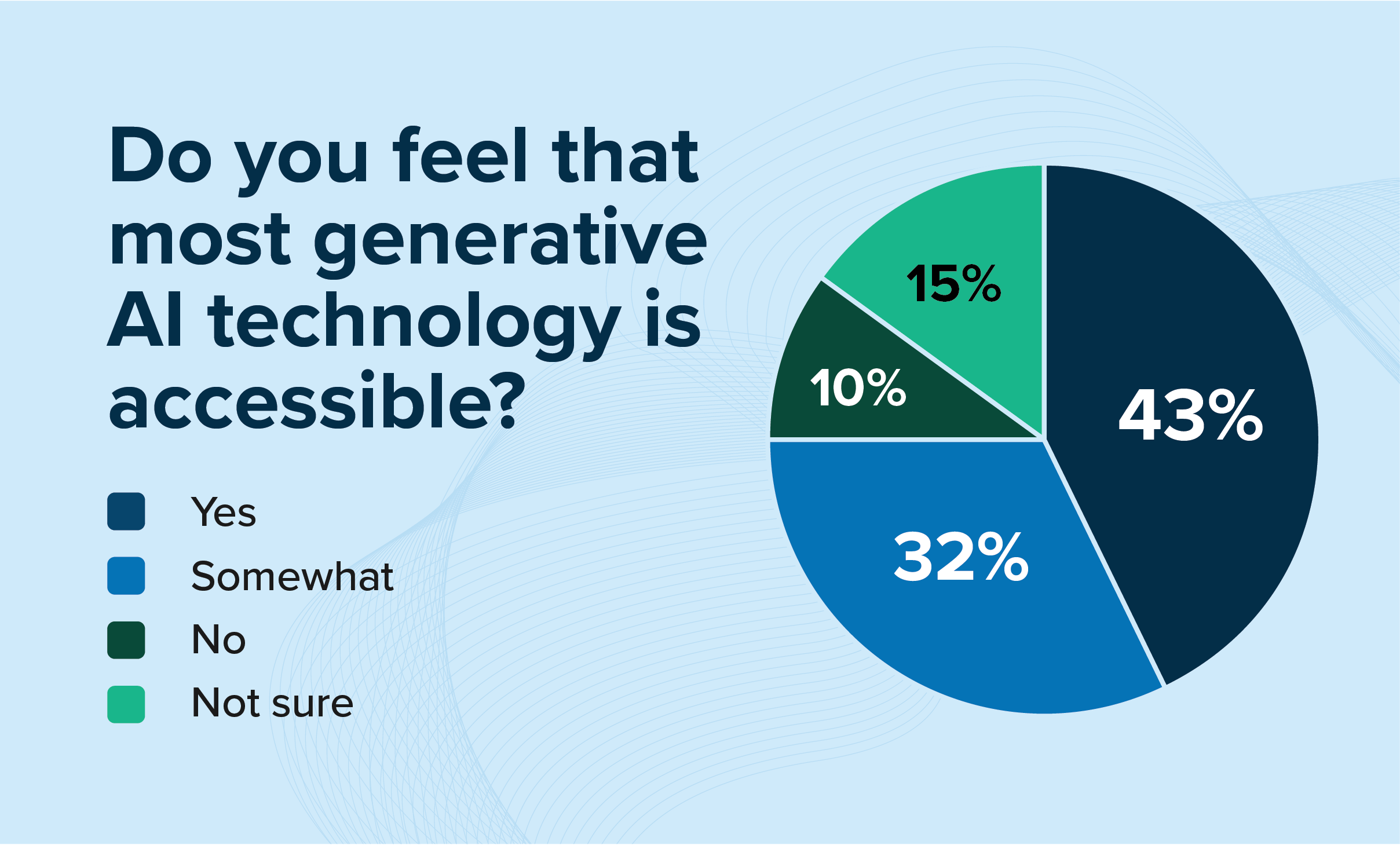

When asked whether generative AI technology is accessible and usable by people with disabilities, fewer than half of survey participants (43%) said “yes.”

When asked whether generative AI applications serve a sufficiently diverse audience (age, gender, nationality, ability, etc.):

- 42% said yes
- 50% said somewhat, but it could be improved
- 10% said no

Data privacy concerns

Data privacy is a concern with generative AI and has been the subject of numerous legal actions this year. Among survey respondents:

- 98% said that data privacy should be considered when developing new technologies
- 67% said they feel that most generative AI services infringe on data privacy

Copyright infringement concern

While laws around copyright infringement regarding AI-generated content are still being established, several legal actions have already been brought against companies developing generative AI solutions.

When asked about their level of concern that content produced using generative AI could breach copyright or intellectual property protections, respondents said they were:

- Extremely concerned – 21%
- Concerned – 41%
- Slightly concerned – 29%
- Not at all concerned – 9%

Overall satisfaction with chatbots

To gauge the effect of generative AI on sentiment around chatbot technology, the August survey repeated a question first asked in the March survey: “How satisfied are you with the effectiveness of chatbots?”

The August survey found a 30% reduction in dissatisfaction with chatbots compared with March, a significant positive shift in sentiment over a short period of time.

Testing with real people lends insights for improvement

While the potential of generative AI seems endless, there are also significant obstacles to overcome. As companies continue to integrate generative AI technologies into their digital experiences, testing the outputs with real people is essential for getting useful feedback on the nuanced, hyper-personalized responses users expect. That feedback helps improve the algorithms’ learning and performance over time and helps address bias, poor content, and inaccessibility in future interactions.
