Silos, Redundancy and Other Time Wasters: A Case for Integrated Functional Testing

Some things just belong together. Dogs and frisbees, C-3PO and R2-D2. When it comes to the world of digital product development and product testing, the same applies. Yet so many software development organizations still work in silos. Take manual and automated functional testing as an example. Keeping these two essential processes apart is simply counterproductive.

Segregating manual and automated functional testing creates gaps

Feedback and efficiency are the first to suffer. When automated and manual test practices operate separately, the feedback loop slows and often breaks. Teams work in disparate systems to view and manage test results and bug reports. It’s like driving one car with two different dashboards. Pulling all the results together accurately becomes difficult and, when it can be done at all, takes much more time than it should.

Here are a few other issues with non-integrated functional testing:

- Redundant effort and wasted time
- Delayed product delivery
- Interrupted CI/CD pipeline flow
- Increased chance of bugs ending up in production

Getting the mix right: neither automated nor manual functional testing alone is the answer

In a world where the push to automate in many sectors is constant, our gut may tell us that automation holds the most power in testing environments. Sure, test automation lets you assess a broader range of product functionality and test across multiple platforms more easily. This adds up to more reliability and consistency, faster scaling and faster development schedules, but automation by itself isn’t the silver bullet. It’s often best when used for unit and backend testing.

A good automated smoke suite assures the team that the primary workflows of a product are covered and working. I’ve seen time wasted by involving manual testers on a build where basic functionality, such as login, doesn’t work. Automation catches that up front, so no manual testers need to be involved until later in the process. The key point is that structured automated tests create coverage and confidence; they don’t necessarily catch bugs.
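The gating idea above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular tool: the `SmokeResult` structure, the `ready_for_manual_testing` function, and the workflow names are all hypothetical, and the rule that a single smoke failure blocks the hand-off is an assumption.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SmokeResult:
    """Outcome of one automated smoke test (hypothetical structure)."""
    workflow: str  # e.g. "login" or "search"
    passed: bool


def ready_for_manual_testing(results: list[SmokeResult]) -> tuple[bool, list[str]]:
    """Return (ready, failed_workflows) for a build.

    Assumption: a build goes to manual testers only when every smoke
    test passed. An empty result list counts as not ready, because
    nothing has been verified yet.
    """
    failed = [r.workflow for r in results if not r.passed]
    ready = bool(results) and not failed
    return ready, failed


build_results = [
    SmokeResult("login", passed=False),
    SmokeResult("search", passed=True),
]
ready, failed = ready_for_manual_testing(build_results)
print(ready, failed)  # False ['login']
```

In a pipeline, a check like this would sit between the automated smoke stage and the step that notifies manual testers, so a broken login never reaches them.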

Manual testing, on the other hand, excels in the area of UI and UAT tests. That’s because testing teams can home in on users that meet their ideal demographics, such as testing a UI in a specific cultural context or with a specific age group. These manual efforts are best at surfacing difficult-to-predict customer actions, and insights from observing those actions inform future testing strategies and development. Good structured manual testers are also great at finding things that automation typically misses, like poor image quality, typos, or bad translations.

Our manual testers are also good at catching bugs that users would run into but that automated scripts may not anticipate, such as canceling out of certain user flows early or adding extra options. When we find bugs recurring regularly, we add those to our structured regression testing suite.
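One way to decide when a recurring bug has earned a place in the regression suite is to count how often it is reported. The sketch below assumes each manual bug report carries a stable signature string; the `regression_candidates` function, the signatures, and the threshold of two occurrences are illustrative assumptions, not a documented process.

```python
from __future__ import annotations

from collections import Counter


def regression_candidates(bug_signatures: list[str], min_occurrences: int = 2) -> list[str]:
    """Return bug signatures reported at least `min_occurrences` times.

    `bug_signatures` holds one entry per manual bug report. The default
    threshold of 2 is an arbitrary assumption for this sketch.
    """
    counts = Counter(bug_signatures)
    return sorted(sig for sig, n in counts.items() if n >= min_occurrences)


reports = [
    "cancel-checkout-early",
    "typo-settings",
    "cancel-checkout-early",
    "extra-options-cart",
    "cancel-checkout-early",
    "extra-options-cart",
]
print(regression_candidates(reports))
# ['cancel-checkout-early', 'extra-options-cart']
```

Each signature returned here would then become a scripted regression test case, whether it ends up run manually or automated.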

The bottom line is that you need both of these testing strategies to capture efficiencies and the full spectrum of insights from your development process.

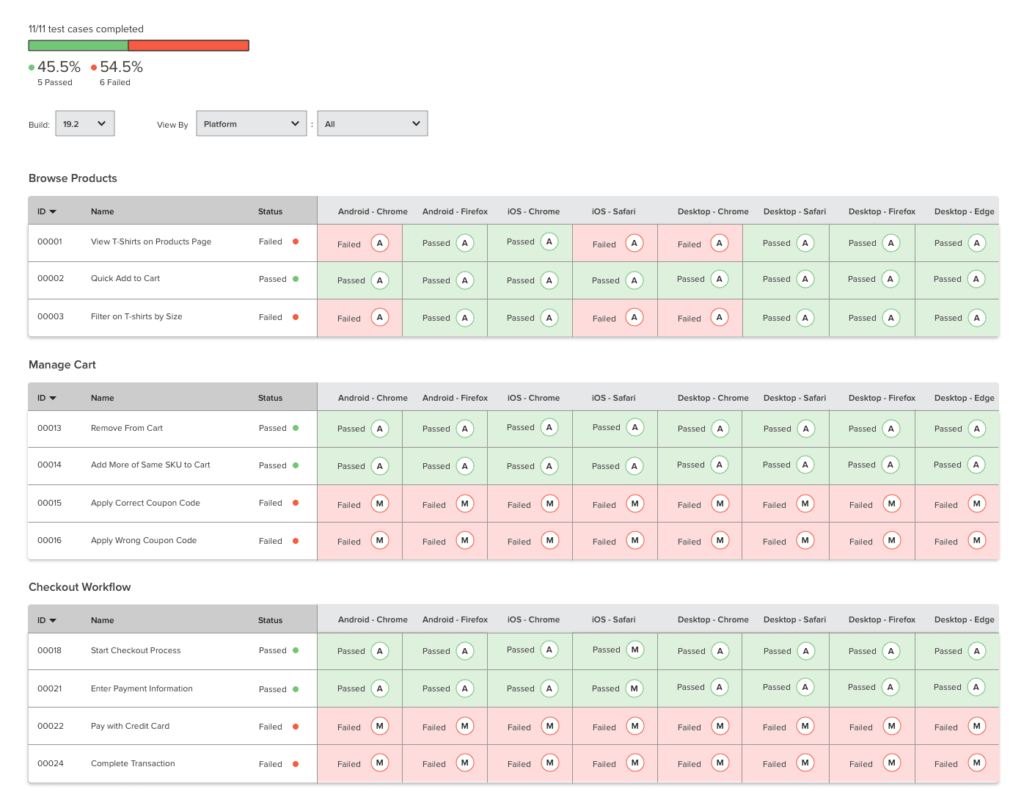

View of automated and manual testing dashboard (A = automated, M = manual)

Architecting the blended approach

We don’t have to choose between one method and another. We can leverage each, with its inherent advantages, to yield a holistic and unified testing method that, like so many things in life, gets its strength as a composite.

Applause’s Integrated Functional Testing combines a real-world crowdsourced approach for manual testing — through both scripted and exploratory methods — with an automation framework that extends open source technologies and gives access to Applause’s device cloud and test case management system. The framework integrates seamlessly into an enterprise CI/CD pipeline. Together, these ensure an accelerated release schedule and the highest-quality QA process.

Understanding use cases for manual and automated tests

With Integrated Functional Testing, manual and automated testing work in concert, each deployed where it makes the most sense:

- Manually testing new features that don’t yet have automated test scripts written
- Manually running test cases that require human intelligence and/or are too difficult to automate
- Automating difficult scenarios that are too tedious for manual testing
- Validating false failures in automation results via manual testing if/when needed
- Testing on rare devices that aren’t available in a device cloud, but can be sourced via the Applause community
- Maintaining coverage via manual testing while an automation suite gets up and running
- Using exploratory bugs to enhance automation test cases
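Several of the use cases above amount to a routing decision for each test case. The following Python sketch shows one possible encoding of that decision. The `PlannedTest` fields, the `choose_mode` function, and the order in which the rules are checked are assumptions made for illustration; they are not part of Applause’s product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedTest:
    """Attributes of a test case that drive the routing (hypothetical)."""
    name: str
    has_automated_script: bool
    needs_human_judgment: bool = False
    device_in_cloud: bool = True


def choose_mode(case: PlannedTest) -> str:
    """Return "manual" or "automated" for a test case.

    Rules, loosely following the list above:
    - cases that need human judgment stay manual
    - devices missing from the device cloud need manual testers
    - features without a script are tested manually until one exists
    - everything else runs in automation
    """
    if case.needs_human_judgment:
        return "manual"
    if not case.device_in_cloud:
        return "manual"
    if not case.has_automated_script:
        return "manual"
    return "automated"


cases = [
    PlannedTest("login regression", has_automated_script=True),
    PlannedTest("new wishlist feature", has_automated_script=False),
    PlannedTest("translation review", has_automated_script=True, needs_human_judgment=True),
    PlannedTest("checkout on rare handset", has_automated_script=True, device_in_cloud=False),
]
for case in cases:
    print(f"{case.name}: {choose_mode(case)}")
# login regression: automated
# new wishlist feature: manual
# translation review: manual
# checkout on rare handset: manual
```

A real setup would likely add a path for re-checking automation failures by hand, which this sketch leaves out for brevity.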

Supporting both QA and product teams via integrated functional testing

There are many advantages of integrated functional testing for QA and product teams alike. QA will get a better sense of quality and reduce the number of defects that make their way into production. Product teams will improve their understanding of the overall health of any specific product feature or functionality, helping them better evaluate the risks of releasing specific features or builds.
